Label Studio is an open-source data labeling tool that helps you create high-quality datasets for various machine learning tasks. It supports a wide range of data types, including images, text, audio, and video. This article focuses on setting up Label Studio and using it for two common tasks: image labeling and text classification. We’ll walk through installation, configuration, and real-world use cases, then suggest datasets for practice.

What is Label Studio?

Label Studio is a versatile tool for data annotation, allowing users to label data for tasks like object detection, image classification, text classification, and more. It provides a web-based interface to create projects, define labeling tasks, and collaborate with annotators. Its flexibility makes it ideal for machine learning practitioners, data scientists, and teams preparing datasets for AI models.

Key features:

- Supports multiple data types (images, text, audio, etc.)

- Customizable labeling interfaces

- Collaboration tools for teams

- Export formats that common machine learning frameworks can consume (e.g., JSON, CSV, COCO)

Getting Started with Label Studio

Installation

The easiest way to get Label Studio up and running is via pip. You can open a terminal and run:

pip install label-studio

After installation, launch the Label Studio server:

label-studio

This starts a local web server at http://localhost:8080. Open this URL in a web browser to access the Label Studio interface.

Alternatively, you can run Label Studio with Docker:

- Install Docker: If you don’t have Docker installed, follow the instructions on the official Docker website: https://docs.docker.com/get-docker/

- Pull and Run Label Studio Docker Image: Open your terminal or command prompt and run the following commands:

docker pull heartexlabs/label-studio:latest

docker run -it -p 8080:8080 -v $(pwd)/mydata:/label-studio/data heartexlabs/label-studio:latest

- docker pull heartexlabs/label-studio:latest: Downloads the latest Label Studio Docker image.

- -it: Runs the container in interactive mode and allocates a pseudo-TTY.

- -p 8080:8080: Maps port 8080 of your host machine to port 8080 inside the container, allowing you to access Label Studio in your browser.

- -v $(pwd)/mydata:/label-studio/data: Mounts a local directory named mydata (or whatever you choose) to /label-studio/data inside the container. This ensures your project data, database, and uploaded files are persisted even if you stop and remove the container.

- Access Label Studio: Open your web browser and navigate to http://localhost:8080. You’ll be prompted to create an account.
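Whichever install route you choose, a quick reachability check can confirm the server is answering before you open the browser. This is a minimal sketch using only the Python standard library; the helper name is_server_up is illustrative, and the URL assumes the default port 8080 used above.

```python
import urllib.error
import urllib.request


def is_server_up(url: str = "http://localhost:8080", timeout: float = 3.0) -> bool:
    """Return True if anything answers HTTP at `url` (illustrative helper)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server replied, just not with a 2xx status: it is still running.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, and similar.
        return False


print("Label Studio reachable:", is_server_up())
```

If this prints False right after starting the container, give the server a few seconds to finish booting and try again.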

Basic Workflow in Label Studio

Once logged in, the general workflow involves:

- Creating a Project: Click the “Create Project” button.

- Data Import: Upload your data (images, text files, CSVs, etc.) or connect to cloud storage.

- Labeling Setup: Configure your labeling interface using a visual editor or by writing XML-like configuration. This defines the annotation types (bounding boxes, text choices, etc.) and labels.

- Labeling Data: Start annotating your data.

- Exporting Annotations: Export your labeled data in various formats (JSON, COCO, Pascal VOC, etc.) for model training.
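Besides uploading files directly, the import step also accepts a JSON file that lists tasks, where each task wraps its fields in a "data" object. The sketch below writes such a file for a few images. The URLs and the file name tasks.json are placeholders, and the key name image is an assumption that must match the variable your labeling configuration reads.

```python
import json

# Hypothetical image URLs; replace them with locations your Label Studio
# instance can actually reach.
image_urls = [
    "https://example.com/boards/board_001.jpg",
    "https://example.com/boards/board_002.jpg",
]

# One task per image, each wrapping its fields in a "data" object.
tasks = [{"data": {"image": url}} for url in image_urls]

with open("tasks.json", "w", encoding="utf-8") as f:
    json.dump(tasks, f, indent=2)

print(f"Wrote {len(tasks)} tasks to tasks.json")
```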

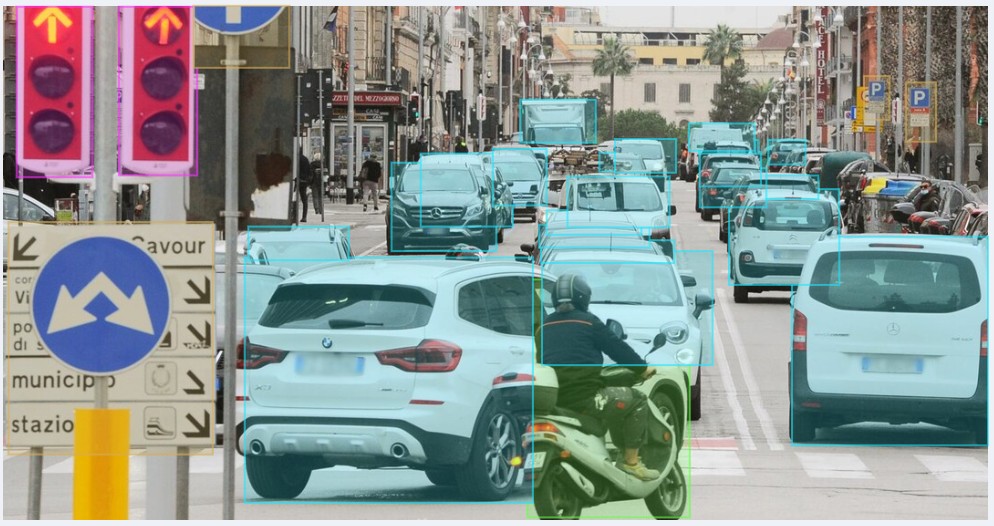

Image Labeling: Object Detection with Bounding Boxes

Real-World Applications: Detecting defects in manufactured products, identifying objects in autonomous driving scenes, or recognizing medical anomalies in X-rays.

Example: Defect Detection in Circuit Boards

Let’s imagine you want to train a model to detect defects (e.g., solder bridges, missing components) on circuit boards.

- Create a Project:

- From the Label Studio dashboard, click “Create Project”.

- Give your project a name (e.g., “Circuit Board Defect Detection”).

- Import Data:

- For practice, you can use a small set of images of circuit boards, some with defects and some without. You can find free image datasets online (see “Suggested Datasets” below).

- Drag and drop your image files into the “Data Import” area or use the “Upload Files” option.

- Labeling Setup (Bounding Box Configuration):

- Select “Computer Vision” from the left panel, then choose “Object Detection with Bounding Boxes”.

- You’ll see a pre-filled configuration. Here’s a typical one:

<View>

<Image name="image" value="$image"/>

<RectangleLabels name="label" toName="image">

<Label value="Solder Bridge" background="red"/>

<Label value="Missing Component" background="blue"/>

<Label value="Scratch" background="yellow"/>

</RectangleLabels>

</View>

- <Image name="image" value="$image"/>: Displays the image for annotation. $image is a placeholder that Label Studio replaces with the path to your image.

- <RectangleLabels name="label" toName="image">: Defines the bounding box annotation tool. name is an internal ID, and toName links it to the image object.

- <Label value="Solder Bridge" background="red"/>: Defines a specific label (e.g., “Solder Bridge”) with a display color. Add as many labels as you need.

Click “Save” to apply the configuration.
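Because the configuration is XML, it is easy to sanity-check before pasting it into the editor. The sketch below parses the bounding box configuration with Python's standard library, confirms that every toName points at a tag that exists, and lists the labels. The function name check_config is illustrative, and the check assumes each toName holds a single target name.

```python
import xml.etree.ElementTree as ET

CONFIG = """
<View>
  <Image name="image" value="$image"/>
  <RectangleLabels name="label" toName="image">
    <Label value="Solder Bridge" background="red"/>
    <Label value="Missing Component" background="blue"/>
    <Label value="Scratch" background="yellow"/>
  </RectangleLabels>
</View>
"""


def check_config(xml_text: str) -> list[str]:
    """Return the label names, raising ValueError on a dangling toName."""
    root = ET.fromstring(xml_text)
    # Every tag with a "name" attribute can be the target of a toName link.
    names = {el.get("name") for el in root.iter() if el.get("name")}
    for el in root.iter():
        target = el.get("toName")
        if target is not None and target not in names:
            raise ValueError(f"<{el.tag}> points to unknown name {target!r}")
    return [label.get("value") for label in root.iter("Label")]


print(check_config(CONFIG))  # ['Solder Bridge', 'Missing Component', 'Scratch']
```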

- Labeling:

- Go to the “Data Manager” tab.

- Click “Label All Tasks” or select individual tasks to start labeling.

- In the labeling interface:

- Select the appropriate label (e.g., “Solder Bridge”) from the sidebar.

- Click and drag your mouse to draw a bounding box around the defect on the image.

- You can adjust the size and position of the bounding box after drawing.

- Repeat for all defects in the image.

- Click “Submit” to save your annotation and move to the next image.
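Once the boxes are submitted and exported as JSON, keep in mind that Label Studio stores rectangle coordinates as percentages of the image size, not as pixels. The sketch below converts them to pixel corners. The sample task is hand-written and trimmed to the fields used here, the image path is hypothetical, and box rotation is ignored for simplicity.

```python
# A trimmed, hand-written sample shaped like one task from a JSON export.
exported_task = {
    "data": {"image": "/data/upload/board_001.jpg"},  # hypothetical path
    "annotations": [
        {
            "result": [
                {
                    "type": "rectanglelabels",
                    "original_width": 1200,
                    "original_height": 800,
                    "value": {
                        "x": 25.0,
                        "y": 50.0,
                        "width": 10.0,
                        "height": 5.0,
                        "rectanglelabels": ["Solder Bridge"],
                    },
                }
            ]
        }
    ],
}


def to_pixel_boxes(task: dict) -> list[tuple[str, int, int, int, int]]:
    """Convert percentage boxes to (label, x_min, y_min, x_max, y_max) pixels."""
    boxes = []
    for annotation in task.get("annotations", []):
        for item in annotation.get("result", []):
            if item.get("type") != "rectanglelabels":
                continue
            v = item["value"]
            w, h = item["original_width"], item["original_height"]
            x_min = round(v["x"] / 100 * w)
            y_min = round(v["y"] / 100 * h)
            x_max = round((v["x"] + v["width"]) / 100 * w)
            y_max = round((v["y"] + v["height"]) / 100 * h)
            boxes.append((v["rectanglelabels"][0], x_min, y_min, x_max, y_max))
    return boxes


print(to_pixel_boxes(exported_task))  # [('Solder Bridge', 300, 400, 420, 440)]
```

Exporting directly to COCO or Pascal VOC performs a similar conversion for you; a manual step like this is mainly useful when you work from the raw JSON.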

Text Classification: Sentiment Analysis

Use Case: Sentiment Analysis for Customer Reviews

Sentiment analysis involves classifying text (e.g., customer reviews) as positive, negative, or neutral. This is useful for businesses analyzing feedback or building recommendation systems. Label Studio supports text classification tasks with customizable labels.

Example: Movie Review Sentiment Analysis

Let’s classify movie reviews as “Positive”, “Negative”, or “Neutral”.

- Create a Project:

- Click “Create Project” on the dashboard.

- Name it “Movie Review Sentiment”.

- Import Data:

- For practice, you’ll need a CSV or JSON file where each row/object contains a movie review.

- Example CSV structure (reviews.csv):

id,review_text

1,"This movie was absolutely fantastic, a must-see!"

2,"It was okay, nothing special but not terrible."

3,"Terrible acting and boring plot. Avoid at all costs."

- Upload your reviews.csv file. When prompted, select “Treat CSV/TSV as List of tasks” and choose the review_text column to be used for labeling.
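A malformed CSV is a common cause of confusing imports, so it can help to validate the file first. The sketch below reads the sample rows with Python's csv module and fails early if the review_text column is missing or a review is empty. The helper name load_reviews is illustrative, and the sample is inlined so the code runs without a file on disk.

```python
import csv
import io

# Inline copy of the sample file so the sketch runs without reviews.csv on disk.
SAMPLE = '''id,review_text
1,"This movie was absolutely fantastic, a must-see!"
2,"It was okay, nothing special but not terrible."
3,"Terrible acting and boring plot. Avoid at all costs."
'''


def load_reviews(handle, text_column: str = "review_text") -> list[dict]:
    """Read rows and fail early on a missing column or an empty review."""
    reader = csv.DictReader(handle)
    if text_column not in (reader.fieldnames or []):
        raise ValueError(f"column {text_column!r} not found in {reader.fieldnames}")
    rows = list(reader)
    # Numbering assumes one record per line, with the header on line 1.
    for line_no, row in enumerate(rows, start=2):
        if not (row[text_column] or "").strip():
            raise ValueError(f"empty review on line {line_no}")
    return rows


rows = load_reviews(io.StringIO(SAMPLE))
print(len(rows), "reviews loaded")
```

For a real file, pass an open handle instead, for example open("reviews.csv", newline="", encoding="utf-8").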

- Labeling Setup (Text Classification Configuration):

- Select “Natural Language Processing” from the left panel, then choose “Text Classification”.

- The configuration will look something like this:

<View>

<Text name="review" value="$review_text"/>

<Choices name="sentiment" toName="review" choice="single" showInline="true">

<Choice value="Positive"/>

<Choice value="Negative"/>

<Choice value="Neutral"/>

</Choices>

</View>

- <Text name="review" value="$review_text"/>: Displays the text from the review_text column for annotation.

- <Choices name="sentiment" toName="review" choice="single" showInline="true">: Provides the classification options. choice="single" means only one option can be selected.

- <Choice value="Positive"/>: Defines a sentiment choice.

Click “Save”.
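If your label set changes often, you may prefer to generate this configuration instead of editing it by hand. The sketch below builds the same structure from a list of labels using Python's standard library. The function name build_choice_config is illustrative, and the tag names and attributes simply mirror the configuration shown above.

```python
import xml.etree.ElementTree as ET


def build_choice_config(labels: list[str], text_field: str = "review_text") -> str:
    """Build a single-choice text classification config as an XML string."""
    view = ET.Element("View")
    ET.SubElement(view, "Text", name="review", value=f"${text_field}")
    choices = ET.SubElement(
        view, "Choices", name="sentiment", toName="review",
        choice="single", showInline="true",
    )
    for label in labels:
        ET.SubElement(choices, "Choice", value=label)
    ET.indent(view)  # pretty-print; available in Python 3.9+
    return ET.tostring(view, encoding="unicode")


print(build_choice_config(["Positive", "Negative", "Neutral"]))
```

The printed XML can be pasted into the code view of the labeling setup screen.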

- Labeling:

- Go to the “Data Manager” tab.

- Click “Label All Tasks”.

- Read the movie review displayed.

- Select the appropriate sentiment (“Positive”, “Negative”, or “Neutral”) from the choices.

- Click “Submit”.
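After labeling, a JSON export can be flattened into (text, label) pairs for training, and a quick tally reveals class imbalance early. The sketch below works on a hand-written sample shaped like such an export, trimmed to the fields it reads; the labels in it are illustrative, and the names sentiment and review_text follow the configuration and CSV used in this example.

```python
from collections import Counter

# Hand-written sample shaped like a JSON export; real exports carry more fields.
exported = [
    {"data": {"review_text": "This movie was absolutely fantastic, a must-see!"},
     "annotations": [{"result": [
         {"type": "choices", "from_name": "sentiment", "to_name": "review",
          "value": {"choices": ["Positive"]}}]}]},
    {"data": {"review_text": "It was okay, nothing special but not terrible."},
     "annotations": [{"result": [
         {"type": "choices", "from_name": "sentiment", "to_name": "review",
          "value": {"choices": ["Neutral"]}}]}]},
    {"data": {"review_text": "Terrible acting and boring plot. Avoid at all costs."},
     "annotations": [{"result": [
         {"type": "choices", "from_name": "sentiment", "to_name": "review",
          "value": {"choices": ["Negative"]}}]}]},
]


def sentiment_pairs(tasks: list[dict]) -> list[tuple[str, str]]:
    """Collect (review text, chosen label) for every submitted annotation."""
    pairs = []
    for task in tasks:
        for annotation in task.get("annotations", []):
            for item in annotation.get("result", []):
                if item.get("from_name") == "sentiment":
                    pairs.append((task["data"]["review_text"],
                                  item["value"]["choices"][0]))
    return pairs


pairs = sentiment_pairs(exported)
counts = Counter(label for _, label in pairs)
print(counts)
```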

Suggested Datasets for Practice

Practicing with diverse datasets is crucial. Here are some excellent sources for free datasets:

For Image Labeling:

- Kaggle: A vast repository of datasets, often including images for various computer vision tasks. Search for “image classification,” “object detection,” or “image segmentation.”

- Examples: “Dogs vs. Cats,” “Street View House Numbers (SVHN),” “Medical MNIST” (for simple medical image classification).

- Google’s Open Images Dataset: A massive dataset of images with bounding box annotations, object segmentation masks, and image-level labels. Although the full dataset is large, you can often find subsets.

- COCO (Common Objects in Context) Dataset: Widely used for object detection, segmentation, and captioning. It’s a large dataset, but you can download specific categories.

- UCI Machine Learning Repository: While not primarily image-focused, it has some smaller image datasets.

- Roboflow Public Datasets: Roboflow hosts a large collection of public datasets, many of which are already pre-processed and ready for various computer vision tasks. You can often download them in various formats.

For Text Classification:

- Kaggle: Again, a great resource. Search for “text classification,” “sentiment analysis,” or “spam detection.”

- Examples: “IMDB Movie Reviews” (for sentiment analysis), “Amazon Reviews,” “Yelp Reviews,” “SMS Spam Collection Dataset.”

- Hugging Face Datasets: A growing collection of datasets, especially for NLP tasks. They often provide pre-processed versions of popular datasets.

- Examples: “AG News” (news topic classification), “20 Newsgroups” (document classification), various sentiment analysis datasets.

- UCI Machine Learning Repository: Contains several text-based datasets for classification.

- Stanford Sentiment Treebank (SST): A classic dataset for fine-grained sentiment analysis.

- Reuters-21578: A collection of news articles categorized by topic.

Tips for Finding and Using Datasets

- Start Small: Begin with smaller datasets to get comfortable with Label Studio before tackling massive ones.

- Understand the Data Format: Pay attention to how the data is structured (e.g., individual image files, CSV with text, JSON). This will inform how you import it into Label Studio.

- Read Dataset Descriptions: Understand the labels, categories, and potential biases within the dataset.

- Preprocessing: Sometimes, you might need to do some light preprocessing (e.g., renaming files, organizing into folders) before importing into Label Studio.
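As an example of the light preprocessing mentioned above, the sketch below renames a folder of images to a consistent, sequential pattern before import. It runs against a temporary directory with made-up file names so nothing on your disk is touched; the helper name rename_sequentially and the board prefix are illustrative.

```python
import tempfile
from pathlib import Path


def rename_sequentially(folder: Path, prefix: str = "board",
                        suffix: str = ".jpg") -> list[str]:
    """Rename matching files to prefix_001.jpg, prefix_002.jpg, ... in sorted order."""
    new_names = []
    files = sorted(p for p in folder.iterdir() if p.suffix.lower() == suffix)
    for index, path in enumerate(files, start=1):
        target = folder / f"{prefix}_{index:03d}{suffix}"
        # Refuse to overwrite a different file that already has the target name.
        if target.exists() and target != path:
            raise FileExistsError(f"{target.name} already exists")
        path.rename(target)
        new_names.append(target.name)
    return new_names


# Demo in a throwaway directory with hypothetical file names.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    for name in ("IMG_2031.JPG", "scan (2).jpg", "photo-final.jpg"):
        (folder / name).touch()
    renamed = rename_sequentially(folder)

print(renamed)  # ['board_001.jpg', 'board_002.jpg', 'board_003.jpg']
```

Keeping a copy of the original folder until the import looks right is a sensible precaution, since renaming is not easily undone.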

By following this tutorial and practicing with these free datasets, you’ll gain valuable experience in data labeling with Label Studio for both image and text-based machine learning applications.

For further exploration:

- Check the Label Studio Documentation for advanced features like machine learning integration.

- Join the Label Studio community on GitHub or their Slack channel for support.

Share your experience and progress in the comments below!